Amazon Redshift vs Apache Spark

June 03, 2023 | Author: Michael Stromann

Amazon Redshift is a fast, fully managed, petabyte-scale data warehouse service that makes it simple and cost-effective to analyze all your data with your existing business intelligence tools. According to Amazon, you can start small for $0.25 per hour with no commitments or upfront costs, and scale to a petabyte or more for $1,000 per terabyte per year, which Amazon describes as less than a tenth of the cost of most other data warehousing solutions.
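The two prices above measure different things (an hourly node rate versus a yearly per-terabyte rate), so a quick calculation helps put them side by side. This is a back-of-envelope sketch that uses only the figures quoted in the paragraph. The constant and function names are hypothetical, and real Redshift pricing depends on node type, region, and reservation term, so treat the numbers as illustrative only.

```python
# Back-of-envelope Redshift cost arithmetic using only the two prices quoted
# in the text. These figures are assumptions for illustration; actual AWS
# pricing depends on node type, region, and reservation term.

HOURLY_RATE_USD = 0.25      # quoted: "$0.25 per hour"
PER_TB_YEAR_USD = 1_000     # quoted: "$1,000 per terabyte per year"
HOURS_PER_YEAR = 24 * 365   # hours in a non-leap year


def annual_cost_from_hourly(rate_usd: float, hours: int = HOURS_PER_YEAR) -> float:
    """Cost of running at a given hourly rate around the clock for a year."""
    return rate_usd * hours


def annual_cost_for_storage(terabytes: float, rate_usd: float = PER_TB_YEAR_USD) -> float:
    """Yearly cost of a data volume at a flat per-terabyte rate."""
    return terabytes * rate_usd


if __name__ == "__main__":
    print(f"Smallest setup, always on: ${annual_cost_from_hourly(HOURLY_RATE_USD):,.0f}/year")
    # One petabyte is treated as 1,000 TB (decimal units) for this estimate.
    print(f"One petabyte: ${annual_cost_for_storage(1_000):,.0f}/year")
```

At the quoted rate, running the smallest configuration continuously comes to about $2,190 a year, while a petabyte (taken as 1,000 TB) at $1,000 per terabyte comes to about $1,000,000 a year.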

See also: Top 10 Big Data platforms

Amazon Redshift and Apache Spark are both powerful big data processing technologies, but they differ in their architecture, use cases, and performance characteristics.

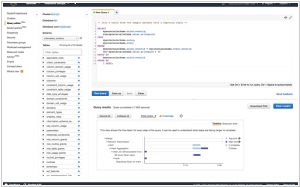

Amazon Redshift is a fully managed data warehousing solution optimized for online analytical processing (OLAP) workloads on large volumes of structured data. Redshift combines columnar storage with massively parallel query execution across its compute nodes to deliver fast query performance, which makes it well suited to data warehousing and business intelligence applications. It integrates with other AWS services (for example, bulk loading from Amazon S3 with the COPY command) and offers scalability and ease of use through automatic backups and high availability.
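To make the two techniques named above concrete, here is a minimal pure-Python sketch; it is not Redshift code, and the table, function names, and slice count are hypothetical. It stores a small table column by column, so an aggregate over `amount` touches only that column, and it computes an average by having each "slice" produce a partial (sum, count) that a final step combines. This is only loosely analogous to how Redshift spreads work across compute node slices and combines results on a leader node.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sales table; each row is (order_id, region, amount).
ROWS = [
    (1, "us", 120.0),
    (2, "eu", 80.0),
    (3, "us", 45.5),
    (4, "ap", 200.0),
    (5, "eu", 30.0),
    (6, "us", 99.5),
]


def to_columns(rows):
    """Pivot row tuples into one list per column (a columnar layout)."""
    order_ids, regions, amounts = zip(*rows)
    return {"order_id": list(order_ids), "region": list(regions), "amount": list(amounts)}


def split_into_slices(values, n_slices):
    """Assign values to slices round-robin, similar in spirit to EVEN distribution."""
    return [values[i::n_slices] for i in range(n_slices)]


def partial_aggregate(slice_values):
    """Per-slice work: return (sum, count) rather than a per-slice average."""
    return sum(slice_values), len(slice_values)


def parallel_avg(values, n_slices=3):
    """Average computed by combining per-slice partial aggregates."""
    slices = split_into_slices(values, n_slices)
    # Threads stand in for slices; in CPython they show the structure, not a speedup.
    with ThreadPoolExecutor(max_workers=n_slices) as pool:
        partials = list(pool.map(partial_aggregate, slices))
    # Final "leader" step: merge the partial results into one answer.
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count


if __name__ == "__main__":
    columns = to_columns(ROWS)
    # The aggregate reads only the "amount" column, never order_id or region.
    print(parallel_avg(columns["amount"]))
```

Returning (sum, count) from each slice, rather than a per-slice average, is the important design choice: averaging the slices' averages would give the wrong result whenever slices hold different numbers of rows.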

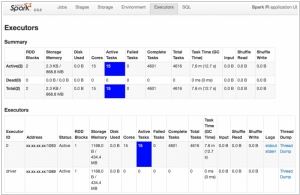

On the other hand, Apache Spark is a versatile, distributed computing framework that can handle a wide range of data processing tasks. It is designed for in-memory processing, which lets iterative and interactive jobs reuse data without rereading it from disk, and it supports both batch processing and near-real-time stream processing. Spark provides a unified programming model with APIs in Scala, Java, Python, and R, enabling developers to build complex data processing workflows. It also ships libraries for SQL (Spark SQL), machine learning (MLlib), graph processing (GraphX), and stream processing (Structured Streaming), making it suitable for a wide variety of use cases.

Amazon Redshift vs Apache Spark in our news:

2015. IBM bets on big data Apache Spark project

IBM announced a major commitment to Apache Spark, the open source big data project, pledging a team of 3,500 researchers to the effort. IBM also said it would open source its own SystemML machine learning technology. Both moves are meant to position IBM as a leader in big data and machine learning; cloud, big data, analytics, and security are the pillars of its transformation strategy. As part of the announcement, IBM committed to integrating Spark into its core analytics products and to partnering with Databricks, the company established to commercially support the open source Spark project. IBM's motives are not purely altruistic. By contributing actively to the open source community, it aims to become a trusted contributor in big data, which strengthens its credibility with companies building big data and machine learning projects on open source tools. That involvement, in turn, opens the door to consulting services and other business opportunities in this space.

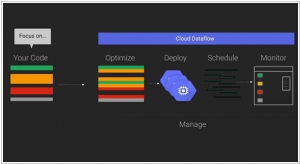

2015. Google partners with Cloudera to bring Cloud Dataflow to Apache Spark

Google announced a collaboration with Cloudera, the Hadoop specialists, to bring its Cloud Dataflow programming model to Apache Spark's data processing engine. Cloud Dataflow lets developers create and monitor data processing pipelines without managing the underlying processing cluster; the service grew out of Google's internal tools for processing very large datasets at internet scale. Not all data processing jobs are alike, however, and sometimes a job needs to run in a different environment: in the cloud, on premises, or on another processing engine. With Cloud Dataflow available on Spark, data analysts can use the same system to build pipelines regardless of the architecture they choose to deploy them on.