Amazon Web Services vs Microsoft Azure

August 19, 2023 | Author: Michael Stromann

Amazon Web Services provides reliable, on-demand infrastructure to power your applications, from hosted internal applications to SaaS offerings. Scale to meet your application demands, whether that means one server or a large cluster. Leverage scalable database solutions, and use cost-effective storage to save and retrieve any amount of data, any time, anywhere.

Microsoft Azure is an open and flexible cloud platform that enables you to quickly build, deploy and manage applications across a global network of Microsoft-managed datacenters. You can build applications using any language, tool or framework. And you can integrate your public cloud applications with your existing IT environment.

Amazon Web Services (AWS) and Microsoft Azure are two of the leading cloud computing platforms, offering a wide range of services for organizations. AWS, provided by Amazon, is known for its comprehensive suite of services, global infrastructure, and extensive ecosystem. It offers a vast selection of services for computing power, storage, databases, networking, artificial intelligence, and more. Microsoft Azure, on the other hand, is a cloud platform provided by Microsoft, integrating well with Microsoft's existing technology stack. It offers a broad set of services, including computing, storage, analytics, AI, and developer tools, and provides seamless integration with popular Microsoft tools like Windows Server and SQL Server.

See also: Top 10 Public Cloud Platforms

Amazon Web Services vs Microsoft Azure in our news:

2023. ChatGPT comes to Microsoft Azure

Microsoft has officially announced the general availability of ChatGPT, which can now be accessed through the Azure OpenAI Service. This service, tailored for enterprises, offers a fully managed platform with additional governance and compliance features to ensure businesses can leverage OpenAI's technologies effectively. ChatGPT now joins a lineup of other OpenAI-developed systems already provided via the Azure OpenAI Service, including the text-generating GPT-3.5, code-generating Codex, and image-generating DALL-E 2. Microsoft maintains a strong collaborative partnership with OpenAI, marked by substantial investments and an exclusive agreement to commercialize OpenAI's AI research.

2021. Microsoft launches Azure Container Apps, a new serverless container service

Microsoft has announced the preview launch of Azure Container Apps, a new fully managed serverless container service that complements its existing container infrastructure services such as Azure Kubernetes Service (AKS). Azure Container Apps is designed for microservices and scales rapidly based on HTTP traffic, events, or long-running background jobs. It shares similarities with AWS App Runner, one of Amazon's serverless container services focused on microservices. Google also provides container-centric services, including Cloud Run, its serverless platform for running container-based applications.

2020. AWS launches Amazon AppFlow, its new SaaS integration service

AWS has launched Amazon AppFlow, an integration service designed to simplify data transfer between AWS and popular SaaS applications such as Google Analytics, Marketo, Salesforce, ServiceNow, Slack, Snowflake, and Zendesk. As with competing services like Microsoft's Power Automate, developers can configure AppFlow to trigger data transfers based on specific events, predetermined schedules, or on-demand requests. Unlike some competitors, AWS positions AppFlow primarily as a data transfer service rather than an automation workflow tool. While the data flow can be bidirectional, AWS's emphasis is on moving data from SaaS applications to other AWS services for further analysis. To facilitate this, AppFlow includes various tools for transforming data as it passes through the service.

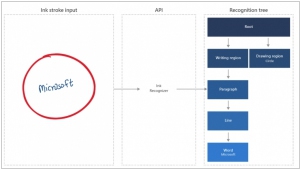

2019. Microsoft launched cloud APIs for form and handwriting recognition

Microsoft has unveiled a range of new cognitive services on its Azure cloud platform, aimed at document processing and related tasks. Among these services are the Ink Recognizer and Form Recognizer, which convert handwritten text and paper documents into digital text and data. The Conversation Transcription service turns phone conversations into text and can identify who spoke each phrase. Another notable addition is the Personalizer service, which delivers personalized recommendations to website and online store visitors based on their behavior. Microsoft has also introduced a visual interface for creating machine learning models, aimed at marketers and other non-specialists. By uploading a dataset and specifying the value to predict, users can explore what machine learning can do in their own fields.

2019. Microsoft launched its own Windows Virtual Desktop service

Microsoft has recognized the potential of offering virtual desktop services independently, despite its prior reliance on cloud partners. The introduction of the Windows Virtual Desktop service, now accessible for companies on the Microsoft Azure cloud platform, allows the installation of Windows, Office, and other software licenses on the cloud instead of employees' individual computers. Consequently, employees can access and work with their software through a virtual desktop. This approach offers several advantages. Firstly, it enables even older Windows 7 computers to operate efficiently while providing the benefits of Windows 10. Secondly, it offers convenience to administrators in terms of creating and maintaining new work environments while ensuring security measures. The Windows Virtual Desktop service itself is free, with costs incurred only for additional Azure resources consumed, such as memory and CPU time.

2019. AWS launches fully-managed backup service for business

Amazon's cloud platform, AWS, has introduced a new service called Backup, allowing companies to securely back up their data from various AWS services as well as their on-premises applications. For on-premises data backup, businesses can utilize the AWS Storage Gateway. This service enables users to define backup policies and retention periods according to their specific requirements. It includes options such as transferring backups to cold storage for EFS data or deleting them entirely after a specified duration. By default, the data is stored in Amazon S3 buckets. While most of the supported services already offer snapshot creation capabilities (except for EFS file systems), Backup automates this process and adds customizable rules to enhance data protection. Notably, the pricing for Backup aligns with the costs associated with using the snapshot features (except for file system backup, which incurs a per-GB charge).
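The policy behavior described above (move a backup to cold storage after some period, then delete it after a longer one) can be sketched as a small state function. This is an illustrative model only: `LifecycleRule`, its field names, and `backup_state` are hypothetical and are not the AWS Backup API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LifecycleRule:
    """Hypothetical retention policy; names are illustrative, not AWS Backup's."""
    cold_after_days: Optional[int]    # None means never move to cold storage
    delete_after_days: Optional[int]  # None means keep the backup indefinitely


def backup_state(age_days: int, rule: LifecycleRule) -> str:
    """Return where a backup of the given age sits under the rule."""
    # Deletion takes priority over the cold-storage transition.
    if rule.delete_after_days is not None and age_days >= rule.delete_after_days:
        return "deleted"
    if rule.cold_after_days is not None and age_days >= rule.cold_after_days:
        return "cold"
    return "warm"


# Example policy (assumed values): cold storage after 30 days, delete after a year.
rule = LifecycleRule(cold_after_days=30, delete_after_days=365)
for age in (10, 30, 400):
    print(age, backup_state(age, rule))
```

Checking deletion first keeps the function correct even if a rule sets both thresholds to the same day.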

2018. Microsoft Azure gets new high-performance storage options

Microsoft is introducing a range of new storage options for Microsoft Azure, with a particular focus on scenarios that require high disk performance. One notable addition is the public preview of Azure Ultra SSD Managed Disks. These drives are designed to deliver extremely low latency, making them well-suited for workloads that demand quick response times. Additionally, Standard SSD Managed Disks have transitioned from preview to general availability within just three months. Moreover, Azure now offers expanded storage capacities of 8, 16, and 32 TB across all storage tiers, including Premium and Standard SSD, as well as Standard HDD. Another new addition is Azure Premium Files, which is currently in the preview stage. This service is also SSD-based and aims to provide improved throughput and reduced latency for SMB operations within Azure Files, a familiar cloud storage solution that uses the standard SMB protocol.

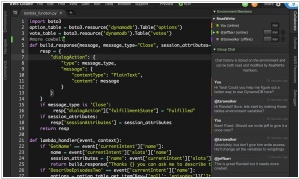

2017. AWS launched browser-based IDE for cloud developers

Amazon Web Services has introduced a new browser-based Integrated Development Environment (IDE) called AWS Cloud9. While it shares similarities with other IDEs and editors like Sublime Text, AWS emphasizes its collaborative editing capabilities and deep integration into the AWS ecosystem. The IDE includes built-in support for various programming languages such as JavaScript, Python, PHP, and more. Cloud9 also provides pre-installed debugging tools. AWS positions this as the first "cloud native" IDE, although competitors may contest this claim. Regardless, Cloud9 offers seamless integration with AWS, enabling developers to create cloud environments and launch new instances directly from the tool.

2017. AWS introduced per-second billing for EC2 instances

In recent years, several competing cloud platforms have shifted towards more flexible billing models, primarily adopting per-minute billing. AWS is taking this a step further by introducing per-second billing for its Linux-based EC2 instances. The new billing model applies to on-demand, reserved, and spot instances, as well as provisioned storage for EBS volumes. Amazon EMR and AWS Batch are also moving to per-second billing. Note that there is a minimum charge of one minute per instance, and that the change does not affect Windows machines or certain Linux distributions that have their own separate hourly charges.
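To make the difference concrete, here is a minimal sketch of the arithmetic, assuming that per-second billing rounds up to whole seconds with a 60-second minimum and that hourly billing charges every started hour in full. The function names and the $0.36 hourly rate are hypothetical and chosen only for illustration.

```python
import math

MINIMUM_BILLED_SECONDS = 60  # one-minute minimum per instance


def per_second_charge(runtime_seconds: float, hourly_rate: float) -> float:
    """Charge under per-second billing with a 60-second minimum."""
    billed_seconds = max(MINIMUM_BILLED_SECONDS, math.ceil(runtime_seconds))
    return billed_seconds * hourly_rate / 3600


def per_hour_charge(runtime_seconds: float, hourly_rate: float) -> float:
    """Charge when every started hour is billed in full (assumed older model)."""
    billed_hours = max(1, math.ceil(runtime_seconds / 3600))
    return billed_hours * hourly_rate


rate = 0.36  # hypothetical price in dollars per hour
runtime = 90 * 60 + 30  # an instance that ran for 90 minutes and 30 seconds
print(f"per-second: ${per_second_charge(runtime, rate):.4f}")
print(f"per-hour:   ${per_hour_charge(runtime, rate):.4f}")
```

Under these assumptions, the gap is widest for short-lived instances, where hourly billing charges a full hour for a few minutes of work.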

2017. AWS offers a virtual machine with over 4TB of memory

AWS has introduced its largest EC2 machine yet, the x1e.32xlarge instance, with 4.19TB of RAM. This is a significant step up from the previous largest EC2 instance, which offered just over 2TB of memory. These machines are equipped with quad-socket Intel Xeon processors operating at 2.3 GHz, up to 25 Gbps of network bandwidth, and two 1,920GB SSDs. Only a select few applications need this much memory, and in-memory databases are the main target: the instances are certified for running SAP's HANA in-memory database and its associated tools, with SAP offering direct support for deploying these applications on them. For comparison, Microsoft Azure's largest memory-optimized machine currently reaches just over 2TB of RAM, while Google's largest tops out at 416GB.
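The 4.19TB figure appears to be a decimal-terabyte reading of the instance's memory, which AWS lists in binary units as 3,904 GiB. The short conversion below shows how the two numbers relate; `gib_to_tb` is a helper written for this example.

```python
GIB = 2**30   # bytes in one gibibyte (binary unit)
TB = 10**12   # bytes in one terabyte (decimal unit)


def gib_to_tb(gib: float) -> float:
    """Convert gibibytes to decimal terabytes."""
    return gib * GIB / TB


# 3,904 GiB is the memory size assumed here for x1e.32xlarge.
print(round(gib_to_tb(3904), 2))
```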