Apache Spark vs Hadoop

May 26, 2023 | Author: Michael Stromann

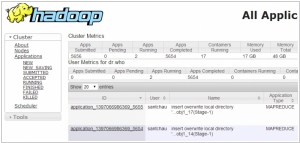

The Apache Hadoop software library is a framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Rather than relying on hardware to deliver high availability, the library itself is designed to detect and handle failures at the application layer, thereby delivering a highly available service on top of a cluster of computers, each of which may be prone to failure.
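
Handling failures "at the application layer" essentially means that the software, not the hardware, recovers from a crashed machine, typically by re-running the lost work somewhere else. The sketch below is a minimal single-process illustration of that idea under stated assumptions: the `Cluster` class, the worker names, the `flaky_workers` set, and the `max_attempts` limit are all hypothetical and are not Hadoop's scheduler or configuration. Hadoop does re-execute failed tasks, but its real mechanism involves YARN, heartbeats, and configurable retry limits.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Cluster:
    workers: List[str]
    # Hypothetical: workers that fail every task assigned to them
    flaky_workers: Set[str] = field(default_factory=set)
    events: List[str] = field(default_factory=list)

    def run_on(self, worker: str, task: int) -> int:
        # Simulate executing a task; a flaky worker raises instead of returning
        if worker in self.flaky_workers:
            raise RuntimeError(f"{worker} failed on task {task}")
        return task * task  # stand-in for real work


def run_with_retries(cluster: Cluster, tasks: List[int], max_attempts: int = 3) -> Dict[int, int]:
    results: Dict[int, int] = {}
    for task in tasks:
        for attempt in range(max_attempts):
            # Rotate through workers so that a retry lands on a different machine
            worker = cluster.workers[(task + attempt) % len(cluster.workers)]
            try:
                results[task] = cluster.run_on(worker, task)
                cluster.events.append(f"task {task} ok on {worker}")
                break
            except RuntimeError as err:
                cluster.events.append(f"retry after: {err}")
        else:
            # Every attempt failed: give up on the whole job
            raise RuntimeError(f"task {task} failed after {max_attempts} attempts")
    return results


if __name__ == "__main__":
    cluster = Cluster(workers=["node-a", "node-b", "node-c"], flaky_workers={"node-b"})
    print(run_with_retries(cluster, [0, 1, 2, 3]))
    print(cluster.events)
```

Here task 1 first lands on the failing `node-b` and is transparently re-run on `node-c`, so the caller still receives a complete result.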

Apache Spark and Hadoop are both popular distributed data processing frameworks, but they have key differences in terms of performance, ease of use, and processing capabilities.

Apache Spark is an open-source data processing and analytics engine designed for speed and efficiency. It provides in-memory processing capabilities, allowing it to perform computations much faster than disk-based systems such as Hadoop MapReduce, which writes intermediate results to disk between processing stages. Spark offers a rich set of APIs and libraries for various data processing tasks, including batch processing, real-time streaming, machine learning, and graph processing. Its ability to cache data in memory and perform distributed processing makes it well suited for iterative algorithms and complex analytics workloads.
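
Why caching matters for iterative algorithms can be shown by counting data reads. The sketch below is a plain-Python simulation, not Spark code: `SourceData`, `cached`, and `estimate_mean` are hypothetical names, and the "disk" is just a list with a read counter. In real Spark, the equivalent step would be calling `cache()` on an RDD or DataFrame; this toy ignores partitioning, eviction, and lineage.

```python
from typing import Callable, List

Loader = Callable[[], List[float]]


class SourceData:
    """Stand-in for a dataset stored on disk; counts how many full scans occur."""

    def __init__(self, values: List[float]) -> None:
        self._values = values
        self.reads = 0

    def load(self) -> List[float]:
        self.reads += 1  # each call models one full read from disk
        return list(self._values)


def cached(load: Loader) -> Loader:
    """Read the data once, then serve every later request from memory."""
    store: List[List[float]] = []

    def wrapper() -> List[float]:
        if not store:
            store.append(load())
        return store[0]

    return wrapper


def estimate_mean(load: Loader, iterations: int, lr: float = 0.5) -> float:
    """Gradient descent on (1/2n) * sum((m - x)^2), which converges to the mean."""
    m = 0.0
    for _ in range(iterations):
        data = load()  # an iterative algorithm needs the full dataset on every pass
        grad = sum(m - x for x in data) / len(data)
        m -= lr * grad
    return m


if __name__ == "__main__":
    uncached = SourceData([2.0, 4.0, 6.0, 8.0])
    print(estimate_mean(uncached.load, iterations=20), uncached.reads)  # 20 reads

    in_memory = SourceData([2.0, 4.0, 6.0, 8.0])
    print(estimate_mean(cached(in_memory.load), iterations=20), in_memory.reads)  # 1 read
```

Both runs compute the same estimate; the difference is that the uncached version scans the source on every iteration, which is roughly the access pattern of chaining disk-based jobs.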

Hadoop, on the other hand, is an open-source ecosystem of tools and frameworks that provides distributed storage and processing capabilities for big data. At the core of Hadoop is the Hadoop Distributed File System (HDFS), which enables the distributed storage of large datasets across clusters of commodity machines. Hadoop uses the MapReduce framework for parallel processing of data, making it suitable for batch processing and large-scale data analytics.
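
The MapReduce model splits a job into a map step that emits key/value pairs, a shuffle that groups and sorts those pairs by key, and a reduce step that combines the values for each key. The classic word-count example below simulates those three phases in a single Python process. It is an illustrative sketch only: the function names are hypothetical, and real Hadoop jobs are usually written against the Java `Mapper` and `Reducer` classes (or as executables driven by Hadoop Streaming), with the framework distributing the work across the cluster.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple

Pair = Tuple[str, int]


def mapper(line: str) -> Iterator[Pair]:
    # Map: emit (word, 1) for every word in one input record
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Pair]) -> Dict[str, List[int]]:
    # Shuffle/sort: group values by key and order the keys, as the framework does between phases
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reducer(key: str, values: List[int]) -> Pair:
    # Reduce: combine every value seen for one key
    return key, sum(values)


def run_job(lines: Iterable[str]) -> Dict[str, int]:
    mapped = (pair for line in lines for pair in mapper(line))
    grouped = shuffle(mapped)
    return dict(reducer(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    records = ["Spark and Hadoop", "Hadoop stores data", "Spark caches data"]
    print(run_job(records))
    # {'and': 1, 'caches': 1, 'data': 2, 'hadoop': 2, 'spark': 2, 'stores': 1}
```

Because each mapper call only sees one record and each reducer call only sees one key, the framework is free to run many of them in parallel on different machines.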

The key difference between Apache Spark and Hadoop lies in their processing models and performance characteristics. Spark's in-memory processing makes it fast and efficient, which suits iterative algorithms and near-real-time workloads (Spark's streaming engines typically process incoming data in small micro-batches). Hadoop MapReduce, by contrast, reads from and writes to disk between processing stages; this makes it slower, but it is designed to handle massive amounts of data and excels in batch processing scenarios.

See also: Top 10 Big Data platforms

Apache Spark vs Hadoop in our news:

2015. IBM bets on big data Apache Spark project

IBM has made a significant announcement regarding its involvement in the open source big data project Apache Spark. The company plans to assign a team of 3,500 researchers to this initiative. IBM has also announced that it will open source its own IBM SystemML machine learning technology. These strategic moves are aimed at positioning IBM as a frontrunner in big data and machine learning. Cloud, big data, analytics, and security form the pillars of IBM's transformation strategy. Alongside this announcement, IBM has committed to integrating Spark into its core analytics products and to partnering with Databricks, the company founded to commercially support the open source Spark project. IBM's participation is not purely altruistic. By actively engaging with the open source community, IBM aims to establish itself as a trusted contributor in big data, which in turn strengthens its credibility among companies building big data and machine learning projects on open source tools. This collaboration also opens doors for IBM to offer consulting services and pursue other business opportunities in this space.

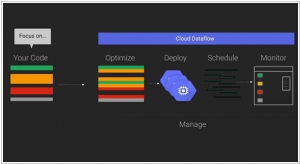

2015. Google partners with Cloudera to bring Cloud Dataflow to Apache Spark

Google has announced a collaboration with Cloudera, the Hadoop specialists, to bring its Cloud Dataflow programming model to the Apache Spark data processing engine. Bringing Cloud Dataflow to Spark gives developers the ability to create and monitor data processing pipelines without having to manage the underlying data processing cluster. The service grew out of Google's internal tools for processing very large datasets at internet scale. However, not all data processing tasks are identical, and sometimes tasks need to run in different environments, such as the cloud, on-premises, or on different processing engines. With Cloud Dataflow, data analysts can use the same system to create pipelines, regardless of the underlying architecture they choose to deploy them on.

2014. MapR partners with Teradata to reach enterprise customers

The last remaining independent Hadoop provider, MapR, and the prominent big data analytics provider, Teradata, have joined forces to integrate their products and develop a unified go-to-market strategy. Under this partnership, Teradata can resell MapR software and professional services and provide customer support. In effect, Teradata will act as MapR's representative and the primary point of contact for enterprises that use, or plan to use, both technologies. Teradata previously had a close partnership with Hortonworks, but it now works with all three major Hadoop providers, extending the reach of its analytics market leadership. Similarly, earlier this week, HP unveiled Vertica for SQL on Hadoop, enabling users to access and analyze data stored in any of the three primary Hadoop distributions: Hortonworks, MapR, and Cloudera.

2014. HP plugs the Vertica analytics platform into Hadoop

HP has introduced Vertica for SQL on Hadoop, a significant announcement in the world of analytics. With Vertica, customers can access and analyze data stored in any of the three primary Hadoop distributions: Hortonworks, MapR, and Cloudera, as well as any combination of them. Because it is still unclear which Hadoop distribution will dominate, many large companies choose to use all three. HP stands out as one of the first vendors to assert that "any flavor of Hadoop will do," a position reinforced by its $50 million investment in Hortonworks, currently the favored Hadoop distribution within HAVEn, HP's analytics stack. The announcement emphasizes not only the platform's interoperability but also its ability to work with data stored in diverse environments such as data lakes or enterprise data hubs. With HP Vertica, organizations get a seamless way to explore and extract value from data stored in the Hadoop Distributed File System (HDFS). Combining Vertica's power, speed, and scalability with Hadoop's ability to handle very large data sets is an attractive proposition that may persuade hesitant managers to move ahead with big data initiatives and unlock the potential of their data.

2014. Cloudera helps to manage Hadoop on Amazon cloud

Hadoop vendor Cloudera has unveiled a new offering named Director, aimed at simplifying the management of Hadoop clusters on the Amazon Web Services (AWS) cloud. Clarke Patterson, Senior Director of Product Marketing, acknowledged the challenges customers face in managing Hadoop clusters while retaining the software's full capabilities. He emphasized that there is no difference between the cloud version and the on-premises version of the software. However, the Director interface has been designed specifically for self-service and includes cloud-specific features such as instance tracking, which lets administrators monitor the cost of each cloud instance and manage spending more effectively.