Apache Spark vs Google Cloud Dataflow

June 03, 2023 | Author: Michael Stromann

Apache Spark and Google Cloud Dataflow are both powerful distributed data processing frameworks, but they differ in architecture and deployment options.

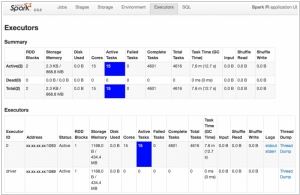

Apache Spark is an open-source framework that provides an in-memory data processing engine and libraries for batch processing, streaming analytics, machine learning, and graph processing. Spark's foundational abstraction is the resilient distributed dataset (RDD), a partitioned, fault-tolerant collection that is processed in parallel across a cluster of machines; the higher-level DataFrame and Dataset APIs are built on top of it. Spark supports multiple languages, including Scala, Java, and Python, and integrates with a wide range of data sources and storage systems. It can be deployed on-premises or in the cloud, which gives teams control over the infrastructure.
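To make the RDD idea more concrete, here is a minimal sketch in plain Python. It is a toy model, not Spark's API: the `ToyRDD` class and its methods are hypothetical names chosen for illustration. It shows three ideas behind RDDs: data is split into partitions, transformations are recorded lazily as a lineage, and an action replays that lineage on each partition (which is also how a lost partition could be recomputed).

```python
from functools import reduce


class ToyRDD:
    """Toy stand-in for an RDD (hypothetical, not the Spark API)."""

    def __init__(self, partitions, lineage=()):
        self.partitions = partitions   # list of lists, one per simulated worker
        self.lineage = tuple(lineage)  # recorded (kind, function) steps, not yet run

    @classmethod
    def parallelize(cls, data, num_partitions):
        # Deal the records round-robin into num_partitions chunks.
        return cls([list(data[i::num_partitions]) for i in range(num_partitions)])

    def map(self, fn):
        # Lazy: only record the step and return a new ToyRDD.
        return ToyRDD(self.partitions, self.lineage + (("map", fn),))

    def filter(self, pred):
        # Lazy: only record the step and return a new ToyRDD.
        return ToyRDD(self.partitions, self.lineage + (("filter", pred),))

    def _compute(self, partition):
        # Replay the recorded lineage on a single partition.
        for kind, fn in self.lineage:
            if kind == "map":
                partition = [fn(x) for x in partition]
            else:
                partition = [x for x in partition if fn(x)]
        return partition

    def collect(self):
        # Action: compute every partition and concatenate the results.
        return [x for p in self.partitions for x in self._compute(p)]

    def reduce(self, fn):
        # Action: reduce within each non-empty partition, then combine the partial results.
        computed = (self._compute(p) for p in self.partitions)
        partials = [reduce(fn, part) for part in computed if part]
        return reduce(fn, partials)


numbers = ToyRDD.parallelize(list(range(1, 11)), num_partitions=3)
squares_of_evens = numbers.filter(lambda x: x % 2 == 0).map(lambda x: x * x)
total = squares_of_evens.reduce(lambda a, b: a + b)
print(total)
```

Nothing is computed when `filter` and `map` are called; the work happens only in `reduce` or `collect`. A real Spark job follows the same shape, but runs the partitions on separate executors rather than in one process.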

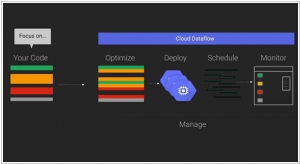

Google Cloud Dataflow, by contrast, is a fully managed service on Google Cloud Platform (GCP). It offers a serverless, scalable way to process both batch and streaming data with a unified programming model. Dataflow executes pipelines written with Apache Beam, which has SDKs for Java, Python, and Go. The service provisions and scales resources automatically, so jobs run without any infrastructure to manage.
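The idea of a unified programming model can be sketched in plain Python: one pipeline definition that works the same way on a bounded (batch) input and on an unbounded (streaming) input. This is only an illustration built on generators, not the Apache Beam or Dataflow API; `build_pipeline`, `endless_events`, and the `user,amount` record format are assumptions made for the example.

```python
import itertools


def build_pipeline(records):
    """One pipeline definition, written against any iterable of text records."""
    parsed = (line.split(",") for line in records)
    valid = (fields for fields in parsed if len(fields) == 2)  # drop malformed rows
    return ((user, int(amount)) for user, amount in valid)


# Bounded input (batch): a finite list that is processed to completion.
batch_input = ["ann,5", "bob,7", "bad-row", "ann,1"]
batch_result = list(build_pipeline(batch_input))


def endless_events():
    # Unbounded input (stream): a generator that never finishes on its own.
    for i in itertools.count():
        yield f"user{i % 2},{i}"


# The same pipeline on the stream; take only the first four results.
stream_result = list(itertools.islice(build_pipeline(endless_events()), 4))

print(batch_result)
print(stream_result)
```

Because each stage is lazy, the pipeline never needs to know whether its input ends. A managed service such as Dataflow adds what this sketch leaves out: distributing the work, scaling workers, and handling failures.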

Spark and Dataflow offer similar data processing capabilities, but their deployment models differ significantly. Spark is the more flexible of the two: it can run on on-premises clusters, cloud platforms, and hybrid environments. Dataflow simplifies deployment by running as a fully managed cloud service that abstracts away infrastructure management.

See also: Top 10 Big Data platforms

Apache Spark vs Google Cloud Dataflow in our news:

2015. IBM bets on big data Apache Spark project

IBM has announced a major commitment to the open source big data project Apache Spark. The company plans to allocate a team of 3,500 researchers to the initiative and will open source its own IBM SystemML machine learning technology. These moves are aimed at positioning IBM as a frontrunner in big data and machine learning; cloud, big data, analytics, and security form the pillars of IBM's transformation strategy. As part of the announcement, IBM has committed to integrating Spark into its core analytics products and to partnering with Databricks, the commercial entity established to support the open source Spark project. IBM's participation is not purely altruistic. By actively engaging with the open source community, IBM aims to establish itself as a trusted contributor in big data, which strengthens its credibility among companies that build big data and machine learning projects with open source tools. That involvement also opens the door for IBM to offer consulting services and pursue other business opportunities in this space.

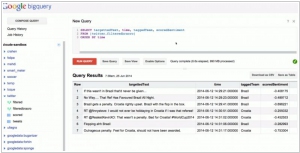

2015. Google partners with Cloudera to bring Cloud Dataflow to Apache Spark

Google has announced a collaboration with Cloudera, the Hadoop specialist, to bring its Cloud Dataflow programming model to the Apache Spark data processing engine. Cloud Dataflow lets developers create and monitor data processing pipelines without managing the underlying data processing cluster. The service originated from Google's internal tools for processing large datasets at internet scale. However, not all data processing tasks are identical, and sometimes it becomes necessary to run them in different environments: in the cloud, on-premises, or on different processing engines. With the Cloud Dataflow model, data analysts can use the same system to create pipelines regardless of the underlying architecture they deploy them on.